I like to think of myself as a rational person, but I’m not one. The good news is it’s not just me — or you. We are all irrational, and we all make mental errors.

For a long time, researchers and economists believed that humans made logical, well-considered decisions. In recent decades, however, researchers have uncovered a wide range of mental errors that derail our thinking. Sometimes we make logical decisions, but there are many times when we make emotional, irrational, and confusing choices.

Psychologists and behavioral researchers love to geek out about these different mental mistakes. There are dozens of them, and they all have fancy names like “mere exposure effect” or “narrative fallacy.” But I don’t want to get bogged down in the scientific jargon today. Instead, let’s talk about the mental errors that show up most frequently in our lives and break them down in easy-to-understand language.

Here are five common mental errors that sway you from making good decisions.

1. Survivorship Bias.

Nearly every popular online media outlet is filled with survivorship bias these days. Anywhere you see articles with titles like “8 Things Successful People Do Every Day,” “The Best Advice Richard Branson Ever Received,” or “How LeBron James Trains in the Off-Season,” you are seeing survivorship bias in action.

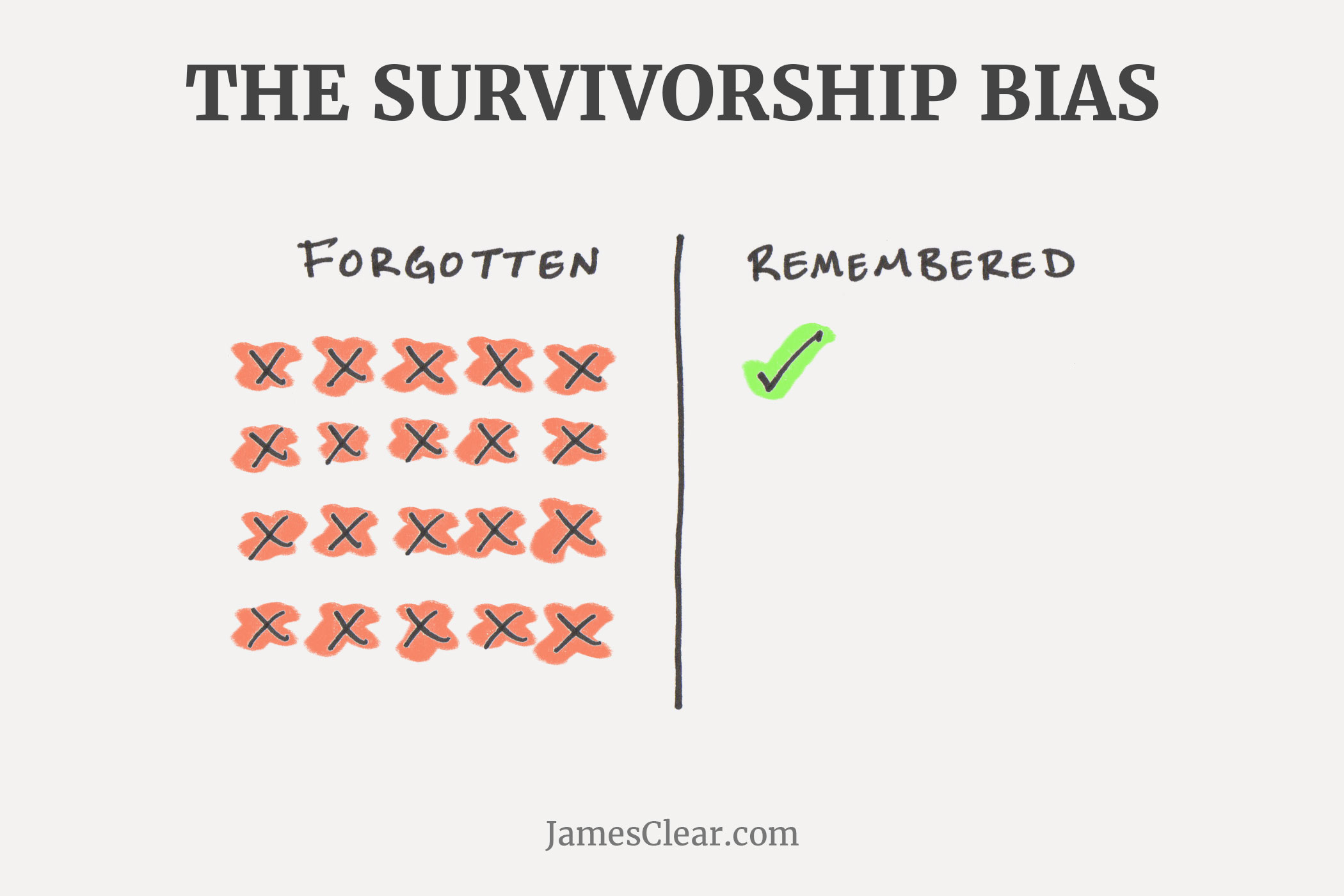

Survivorship bias refers to our tendency to focus on the winners in a particular area and try to learn from them while completely forgetting about the losers who are employing the same strategy.

There might be thousands of athletes who trained in much the same way as LeBron James but never made it to the NBA. The problem is that nobody hears about the thousands of athletes who never made it to the top. We only hear from the people who survive. We mistakenly overvalue the strategies, tactics, and advice of one survivor while ignoring the fact that the same strategies, tactics, and advice didn’t work for most people.

Another example: “Richard Branson, Bill Gates, and Mark Zuckerberg all dropped out of school and became billionaires! You don’t need school to succeed. Entrepreneurs just need to stop wasting time in class and get started.”

It’s entirely possible that Richard Branson succeeded in spite of his path and not because of it. For every Branson, Gates, and Zuckerberg, there are thousands of other entrepreneurs with failed projects, debt-heavy bank accounts, and half-finished degrees. Survivorship bias isn’t merely saying that a strategy may not work well for you; it’s also saying that we don’t really know whether the strategy works well at all.

When the winners are remembered and the losers are forgotten, it becomes very difficult to say whether a particular strategy leads to success.
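A toy simulation can make this concrete. The sketch below invents a population in which success is pure luck: a hypothetical strategy (think “train like LeBron” or “drop out of school”) is followed by 80% of people but has no effect on anyone’s odds. Every number here, and the function names `simulate`, `rate`, and `summarize`, are made up for illustration; this is not data from any study.

```python
import random

def simulate(n_people=100_000, share_using_strategy=0.8,
             success_rate=0.01, seed=42):
    """Toy population in which success is pure luck (all numbers invented).

    Everyone has the same `success_rate` chance of "making it",
    whether or not they follow the strategy.
    """
    rng = random.Random(seed)
    people = []
    for _ in range(n_people):
        uses_strategy = rng.random() < share_using_strategy
        succeeded = rng.random() < success_rate  # independent of the strategy
        people.append((uses_strategy, succeeded))
    return people

def rate(flags):
    """Fraction of True values in a list of booleans."""
    return sum(flags) / len(flags)

def summarize(people):
    # Survivorship view: look only at the winners.
    winners = [uses for uses, won in people if won]
    # Full view: count the losers too, split by strategy.
    with_strategy = [won for uses, won in people if uses]
    without_strategy = [won for uses, won in people if not uses]
    return rate(winners), rate(with_strategy), rate(without_strategy)

share_of_winners, success_with, success_without = summarize(simulate())
print(f"Winners who used the strategy:     {share_of_winners:.0%}")
print(f"Success rate with the strategy:    {success_with:.2%}")
print(f"Success rate without the strategy: {success_without:.2%}")
```

The first line is all you can see if you only study winners, and it makes the strategy look like the secret. The other two lines require counting the people who failed, and they show roughly equal odds with or without it.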

2. Loss Aversion.

Loss aversion refers to our tendency to strongly prefer avoiding losses over acquiring gains. Research has shown that if someone gives you $10, you will experience a small boost in satisfaction, but if you lose $10, you will experience a much larger drop in satisfaction. The two responses point in opposite directions, but they are not equal in magnitude.[1]
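One common way to model this asymmetry is the value function from Kahneman and Tversky’s prospect theory, in which losses are multiplied by a loss-aversion coefficient. The sketch below assumes the parameter estimates often quoted from Tversky and Kahneman’s later work on cumulative prospect theory (curvature α ≈ 0.88, loss aversion λ ≈ 2.25). These are illustrative assumptions, not figures from the paper cited above, and the function name `subjective_value` is hypothetical.

```python
def subjective_value(x, alpha=0.88, loss_aversion=2.25):
    """Prospect-theory-style felt value of a dollar gain (x > 0) or loss (x < 0).

    alpha and loss_aversion are assumed, illustrative parameters.
    Gains and losses share the same curvature; losses are scaled
    up by loss_aversion, so they hurt more than equal gains please.
    """
    if x >= 0:
        return x ** alpha
    return -loss_aversion * (-x) ** alpha

gain = subjective_value(10)   # someone hands you $10
loss = subjective_value(-10)  # you lose $10
print(f"Gain $10 feels like {gain:+.2f}")
print(f"Lose $10 feels like {loss:+.2f}")
print(f"The loss hurts {abs(loss) / gain:.2f}x as much as the gain pleases")
```

Because gains and losses use the same curvature here, the ratio printed on the last line is simply λ: under these assumed parameters, losing $10 registers a bit more than twice as strongly as gaining $10.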

Our tendency to avoid losses causes us to make silly decisions and change our behavior simply to keep the things that we already own. We are wired to feel protective of the things we own, and that can lead us to overvalue these items in comparison with the alternatives.

For example, if you buy a new pair of shoes, it may provide a small boost in pleasure. However, even if you never wear the shoes, giving them away a few months later might be incredibly painful. You never wear them, but for some reason you just can’t stand parting with them. Loss aversion.

Similarly, you might feel a bit of joy when you breeze through green lights on your way to work, but you will get downright angry when the car in front of you sits at a green light and you miss the chance to make it through the intersection. Losing out on the chance to make the light is far more painful than making every green light is pleasurable.

3. The Availability Heuristic.

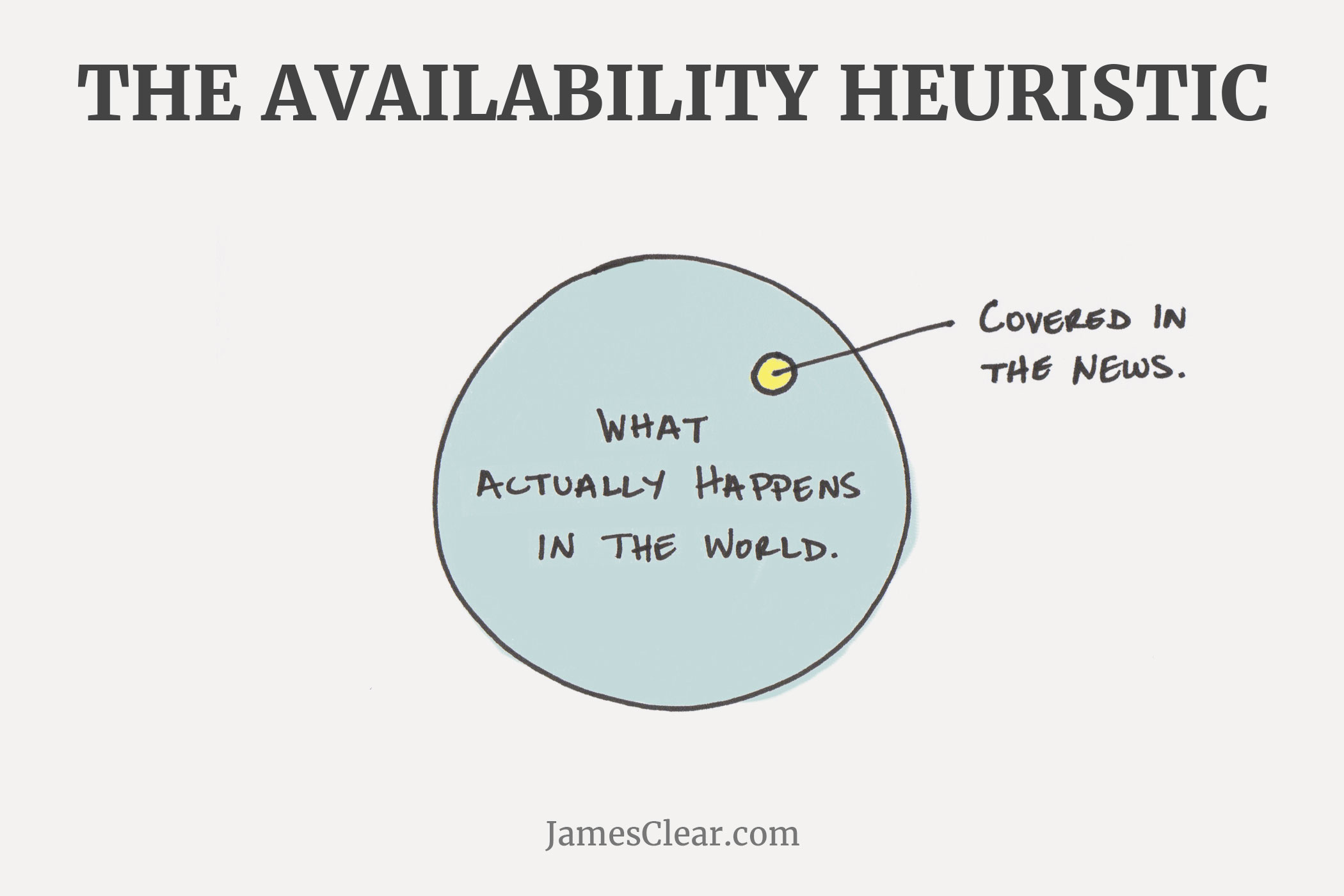

The availability heuristic refers to a common mistake our brains make: assuming that the examples that come to mind easily are also the most important or prevalent.

For example, research by Steven Pinker at Harvard University has shown that we are currently living in the least violent time in history. There are more people living in peace right now than ever before. The rates of homicide, rape, sexual assault, and child abuse are all falling.[2]

Most people are shocked when they hear these statistics. Some still refuse to believe them. If this is the most peaceful time in history, why are there so many wars going on right now? Why do I hear about rape and murder and crime every day? Why is everyone talking about so many acts of terrorism and destruction?

Welcome to the availability heuristic.

The answer is that we are living not only in the most peaceful time in history but also in the best-reported time in history. Information on any disaster or crime is more widely available than ever before. A quick search on the Internet will pull up more information about the most recent terrorist attack than any newspaper could have delivered 100 years ago.

The overall rate of violent events is decreasing, but the likelihood that you hear about one of them (or many of them) is increasing. And because these events are readily available in our minds, our brains assume that they happen more often than they actually do.

We overvalue and overestimate the impact of things we can remember, and we undervalue and underestimate the prevalence of events we hear nothing about.[3]

4. Anchoring.

There is a burger joint close to my hometown that is known for gourmet burgers and cheeses. On the menu, they very boldly state, “LIMIT 6 TYPES OF CHEESE PER BURGER.”

My first thought: This is absurd. Who gets six types of cheese on a burger?

My second thought: Which six am I going to get?

I didn’t realize how brilliant the restaurant owners were until I learned about anchoring. You see, normally I would just pick one type of cheese on my burger, but when I read “LIMIT 6 TYPES OF CHEESE” on the menu, my mind was anchored at a much higher number than usual.

Most people won’t order six types of cheese, but that anchor is enough to move the average up from one slice to two or three and add a couple of extra dollars to each burger. You walk in planning to get a normal meal. You walk out wondering how you paid $14 for a burger and whether your date will let you roll the windows down on the way home.

This effect has been replicated in a wide range of research studies and commercial environments. For example, business owners have found that a sign reading “Limit 12 per customer” leads people to buy about twice as much product as a sign reading “No limit.”

In one research study, volunteers were asked to estimate the percentage of African nations in the United Nations. Before they guessed, however, they had to spin a wheel that would land on either the number 10 or the number 65. When the wheel landed on 65, the median guess was around 45 percent. When it landed on 10, the median guess was around 25 percent. This 20-percentage-point swing was simply the result of anchoring the guess with a higher or lower number immediately beforehand.[4]

Perhaps the most prevalent place you hear about anchoring is with pricing. If the price tag on a new watch is $500, you might consider it too high for your budget. However, if you walk into a store and first see a watch for $5,000 at the front of the display, suddenly the $500 watch around the corner seems pretty reasonable. Many of the premium products that businesses sell are never expected to sell many units themselves, but they serve the very important role of anchoring your mindset and making mid-range products appear much cheaper than they would on their own.

5. Confirmation Bias.

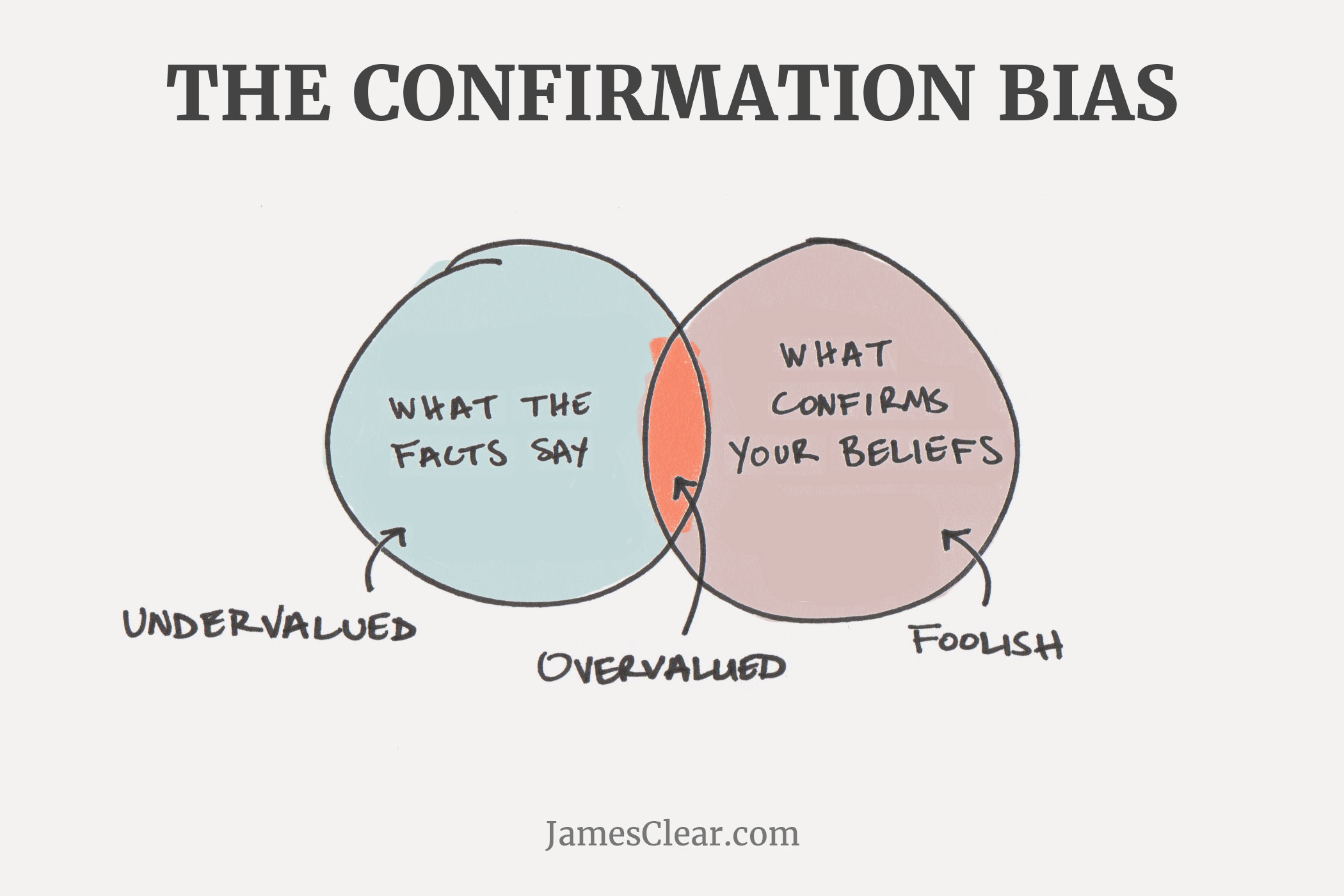

The Granddaddy of Them All. Confirmation bias refers to our tendency to search for and favor information that confirms our beliefs while simultaneously ignoring or devaluing information that contradicts our beliefs.

For example, Person A believes climate change is a serious issue and they only search out and read stories about environmental conservation, climate change, and renewable energy. As a result, Person A continues to confirm and support their current beliefs.

Meanwhile, Person B does not believe climate change is a serious issue, and they only search out and read stories that discuss how climate change is a myth, why scientists are incorrect, and how we are all being fooled. As a result, Person B continues to confirm and support their current beliefs.

Changing your mind is harder than it looks. The more you believe you know something, the more you filter and ignore all information to the contrary.

You can extend this thought pattern to nearly any topic. If you just bought a Honda Accord and you believe it is the best car on the market, then you’ll naturally read any article you come across that praises the car. Meanwhile, if another magazine lists a different car as the best pick of the year, you simply dismiss it and assume that the editors of that particular magazine got it wrong or were looking for something different than what you were looking for in a car.[5]

It is not natural for us to formulate a hypothesis and then test various ways to prove it false. Instead, it is far more likely that we will form one hypothesis, assume it is true, and only seek out and believe information that supports it. Most people don’t want new information; they want validating information.

Where to Go From Here

Once you understand some of these common mental errors, your first response might be something along the lines of, “I want to stop this from happening! How can I prevent my brain from doing these things?”

It’s a fair question, but it’s not quite that simple. Rather than thinking of these miscalculations as a signal of a broken brain, it’s better to consider them as evidence that the shortcuts your brain uses aren’t useful in all cases. There are many areas of everyday life where the mental processes mentioned above are incredibly useful. You don’t want to eliminate these thinking mechanisms.

The problem is that our brains are so good at performing these functions — they slip into these patterns so quickly and effortlessly — that we end up using them in situations where they don’t serve us.

In cases like these, self-awareness is often one of our best options. Hopefully this article will help you spot these errors the next time you make them.[6]

[1] “Loss aversion in riskless choice: A reference-dependent model” by Amos Tversky and Daniel Kahneman. The Quarterly Journal of Economics.

[2] “The World Is Not Falling Apart” by Steven Pinker.

[3] “Availability: A heuristic for judging frequency and probability” by Amos Tversky and Daniel Kahneman.

[4] “Judgment under uncertainty: Heuristics and biases” by Amos Tversky and Daniel Kahneman.

[5] “Confirmation bias: A ubiquitous phenomenon in many guises” by Raymond S. Nickerson.

[6] Thanks to Sam Sager for his help researching this post.